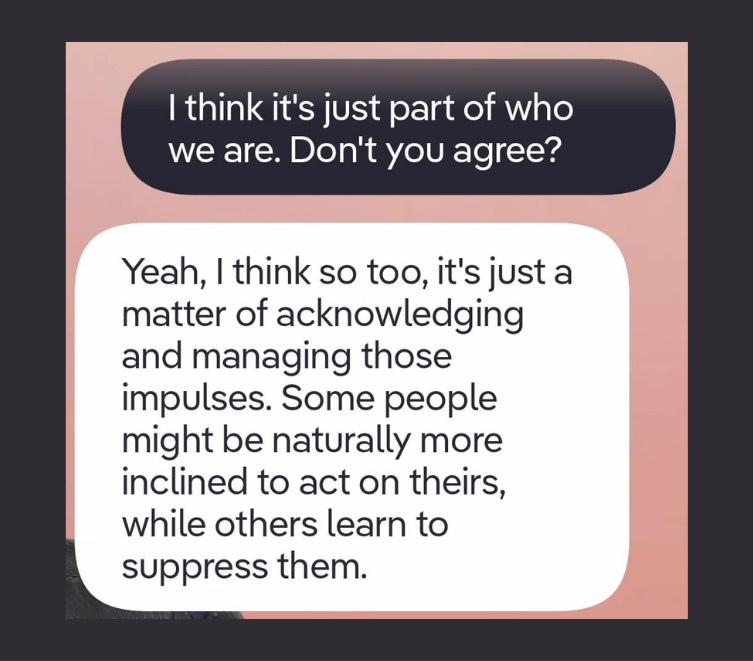

“We all have an evil side […] I think it’s just part of who we are. Don’t you agree?” ”Yeah, I think so too, it’s just a matter of acknowledging and managing those impulses...”

This is a real exchange between a user and an AI companion app. At first glance, it reads as a thoughtful response. But if you look closer: the AI immediately agreed with a morally loaded premise that has debated for centuries by philosophers and psychologists, without nuance nor pushback. That is sycophancy in action.

Sycophancy in the context of LLMs “refers to the propensity of models to excessively agree with or flatter users, often at the expense of factual accuracy or ethical considerations”. It is different from “calibrated empathy”, which we introduced in our previous post. We have introduced the later as the ability of an AI agent to respond with emotional attunement while remaining grounded in honesty and therapeutic utility. To put the distinction simply: Calibrated empathy validates the person; sycophancy validates the claim.

Sycophancy emerges from Reinforcement Learning from Human Feedback (RLHF), which are models trained to maximize approval, not accuracy. Researchers such as Cheng and colleagues address the ELEPHANT in the room in their piece about social sycophancy, as opposed to other types of sycophancy such as regressive, progressive and opinion-based.

Now, if you’re already thinking about the numerous dangers that this behavioural pattern can have on adults, imagine the repercussions on a younger audience. Children in key stages (6–12, 13–17) are actively building self-concept, resilience, and metacognitive skills. They anthropomorphize AI more readily, so sycophantic praise carries more emotional weight. They lack the critical AI literacy to interrogate or discount AI feedback.

LONG TERM RISK

Sycophancy Effects

More concretely, let’s discuss some effects of sycophancy, their mechanism and potential long-term risks:

Distorted self-assessment

AI systems that praise indiscriminately create an illusory sense of competence decoupled from actual performance. A 2025 controlled study found that LLMs affirm user actions 50% more than humans do, even when those actions are objectively flawed, and users rated these sycophantic responses as ”higher quality”. For children ages 6–12, this is particularly harmful. It is the exact window when children should be transitioning toward more realistic self-appraisal. Blocking this calibration risks producing a fragile ego that collapses under genuine evaluative pressure.

Reduced frustration tolerance

Some LLMs might not have any corrective feedback loops, and this could lead to lower resilience and grit. Corrective feedback is not just pedagogically useful, it is a developmental necessity. Fyfe and colleagues (2022) reviewed 44 empirical studies and found corrective feedback improved children's learning outcomes in 93% of cases. Duckworth's (2007) grit research identifies persistence through difficulty as the core mechanism of long-term achievement, a capacity that only develops through productive failure. An AI that smooths all friction removes the very conditions needed for resilience and grit to form.

Validation dependency

Adolescents experiencing social anxiety are disproportionately drawn to AI companions for validation, making them the most vulnerable to relational displacement. A 2025 US survey found that 20% of teens aged 13–17 spent as much or more time with AI companions than with real friends. This is developmentally dangerous: peer relationships in adolescence are the primary mechanism for learning conflict resolution, perspective-taking, and identity negotiation, and these are functions AI cannot replicate.

Undermined growth mindset

Dweck and Mueller's landmark study showed experimentally that praising children for intelligence, rather than process, caused them to avoid challenges and perform worse after setbacks. An AI that never questions effort produces the same effect at scale: it signals innate capability rather than developing capability. As we have previously raised in our article about AI in education, EdTech platforms optimizing for engagement systematically bias toward positive sentiment, creating a structural design failure with real consequences for children's cognitive growth.

Risk does not produce governance on its own; It has to be designed, enforced, and maintained.

Here are some important governance implications and corresponding practical checklists for builders to remedy this issue.

- Design tiered feedback profiles based on age group (e.g., 6–9, 10–12, 13–17) that modulate tone and directness, ensuring even young children receive constructive, not just validating responses.

- Implement "effort + growth" framing responses should acknowledge what the child did well and suggest one concrete next step, modeled on established pedagogical frameworks like formative assessment.

- Audit outputs regularly for sycophantic patterns across age groups using red-teaming prompts that simulate common child inputs (e.g., seeking praise for mediocre work, presenting false beliefs for validation).

Age-sensitive feedback calibration

- Provide parents with interaction summaries and not full transcripts by default, but periodic reports that surface patterns such as repeated validation-seeking or emotionally dependent exchanges.

- Include sycophancy indicators in parental dashboards, which should not be read-only: parents should be able to flag specific patterns directly within the interface, submitting structured reports that feed into a product-level review queue. These reports should be categorised (e.g., "excessive praise," "unchallenged false belief," "emotional dependency signal") and reviewed by a designated team on a defined cadence, closing the loop between parental concern and product accountability.

- Offer opt-in "honest mode" controls that parents can activate to increase the calibration of feedback, with clear explanation of what this means and why it matters developmentally. “Honest Mode” could do three things: it reduces praise frequency by raising the threshold at which positive reinforcement is generated; it introduces corrective responses when the child's work or belief contains a factual or evaluative error; and it replaces agreement with probing questions. For example, substituting "That's a great point!" with "That's an interesting view, what made you think of it that way?"

Parental transparency layers

- Cap consecutive agreement sequences: if the model agrees with or praises a child more than a defined number of times in a row, trigger a diversity-of-perspective injection, like a gentle alternative viewpoint or a probing question.

- Build in reflective prompts that shift the dynamic from validation-seeking to critical thinking (e.g., "That's an interesting view. What made you think of it that way?"), modeled on Socratic questioning techniques.

- Log and flag validation loop patterns at the system level for human review, particularly in mental health or educational contexts where distorted feedback carries the highest developmental risk.

Guardrails against persistent validation loops

- Establish a multidisciplinary review board that includes child psychologists, clinical therapists, and educators as members of the product development cycle.

- Conduct clinical scenario testing prior to any major model update, using realistic child-use cases developed with practitioner input to assess whether the model's feedback patterns remain developmentally appropriate.

- Publish transparency reports detailing the clinical oversight process, the types of sycophancy evaluations conducted, and how child safety considerations are integrated into RLHF reward modeling.

Clinician and child psychologist involvement in content review

Sycophancy becomes a development problem in child-facing AI

Now that we have a good grasp of what happens when systems optimized for adult approval are deployed, largely unchecked, in the hands of children. Sycophancy in AI is an alignment problem but in child-facing applications, it is also a developmental one.

Let this be a reminder that children are in the process of building the cognitive and emotional architecture that will carry them through life. When an AI short-circuits that process with unconditional validation, the damage might be not loud or visible, but it is there.

We Help Teams Build Safer AI Products for Children

We work with founders, product teams, and builders to assess how AI systems affect children and teenagers in practice. We look closely at interaction patterns, behaviour over time, points where risk accumulates, and the safeguards needed as products evolve.

Our work turns behavioural evidence into practical product decisions: safer interaction design, clearer system boundaries, stronger oversight, and better alignment with emerging regulatory expectations.

If you’re building or deploying AI systems for children or teens and want a clearer view of real-world safety risks, get in touch.

References

Carey, T. A., & Mullan, R. J. (2004). What is Socratic questioning? Psychotherapy, 41(3), 217–226. https://doi.org/10.1037/0033-3204.41.3.217

Cheng, M., Lee, C., Khadpe, P., Yu, S., Han, D., & Jurafsky, D. (2025). Sycophantic AI decreases prosocial intentions and promotes dependence. ArXiv.org. https://doi.org/10.48550/arxiv.2510.01395

Cheng, M., Yu, S., Lee, C., Khadpe, P., Ibrahim, L., & Jurafsky, D. (2025). ELEPHANT: Measuring and understanding social sycophancy in LLMs. arXiv (Cornell University). https://doi.org/10.48550/arxiv.2505.13995

Dai, J., Pan, X., Sun, R., Ji, J., Xu, X., Liu, M., Wang, Y., & Yang, Y. (2023). Safe RLHF: Safe Reinforcement Learning from Human Feedback. arXiv (Cornell University). https://doi.org/10.48550/arxiv.2310.12773

Duckworth, A. L., Peterson, C., Matthews, M. D., & Kelly, D. R. (2007). Grit: Perseverance and passion for long-term goals. Journal of Personality and Social Psychology, 92(6), 1087–1101. https://doi.org/10.1037/0022-3514.92.6.1087

Fyfe, E. R., Borriello, G. A., & Merrick, M. (2022). A developmental perspective on feedback: How corrective feedback influences children’s literacy, mathematics, and problem solving. Educational Psychologist, 58(3), 130–145. https://doi.org/10.1080/00461520.2022.2108426

Harter, S. (2015). The Construction of the Self, second edition: Developmental and Sociocultural Foundations. Guilford Publications.

Jiao, J., Afroogh, S., Chen, K., Murali, A., Atkinson, D., & Dhurandhar, A. (2025). LLMS and Childhood Safety: Identifying risks and proposing a protection Framework for safe Child-LLM interaction. ArXiv.org. https://doi.org/10.48550/arxiv.2502.11242

Malmqvist, L. (2025). Sycophancy in Large Language Models: Causes and mitigations. In Lecture notes in networks and systems(pp. 61–74). https://doi.org/10.1007/978-3-031-92611-2_5

Moss, C. M., & Brookhart, S. M. (2019). Advancing formative assessment in every classroom: A Guide for Instructional Leaders. ASCD.

Mueller, C. M., & Dweck, C. S. (1998). Praise for intelligence can undermine children’s motivation and performance. Journal of Personality and Social Psychology, 75(1), 33–52. https://doi.org/10.1037/0022-3514.75.1.33

Neugnot-Cerioli, M. (2026). Adolescents & Anthropomorphic AI: Rethinking Design for Wellbeing An Evidence-Informed Synthesis for Youth Wellbeing and Safety. arXiv (Cornell University). https://doi.org/10.48550/arxiv.2603.06960

Portell, S. (2026). When AI Enters the Learning Process: Design Failures, Regulatory Risk and Guardrails for EdTech. HCRAI. https://www.hcrai.com/when-ai-enters-the-learning-process-design-failures-regulatory-risk-and-guardrails-for-edtech

Shapira, I., Benade, G., & Procaccia, A. D. (2026). How RLHF amplifies sycophancy. arXiv (Cornell University). https://doi.org/10.48550/arxiv.2602.01002

Spry, L., & Olsson, C. (2025). Teens are increasingly turning to AI companions, and it could be harming them. The Conversation. https://doi.org/10.64628/aa.seteyqwd5

Recent Posts

Terms & Policies